LLM Library

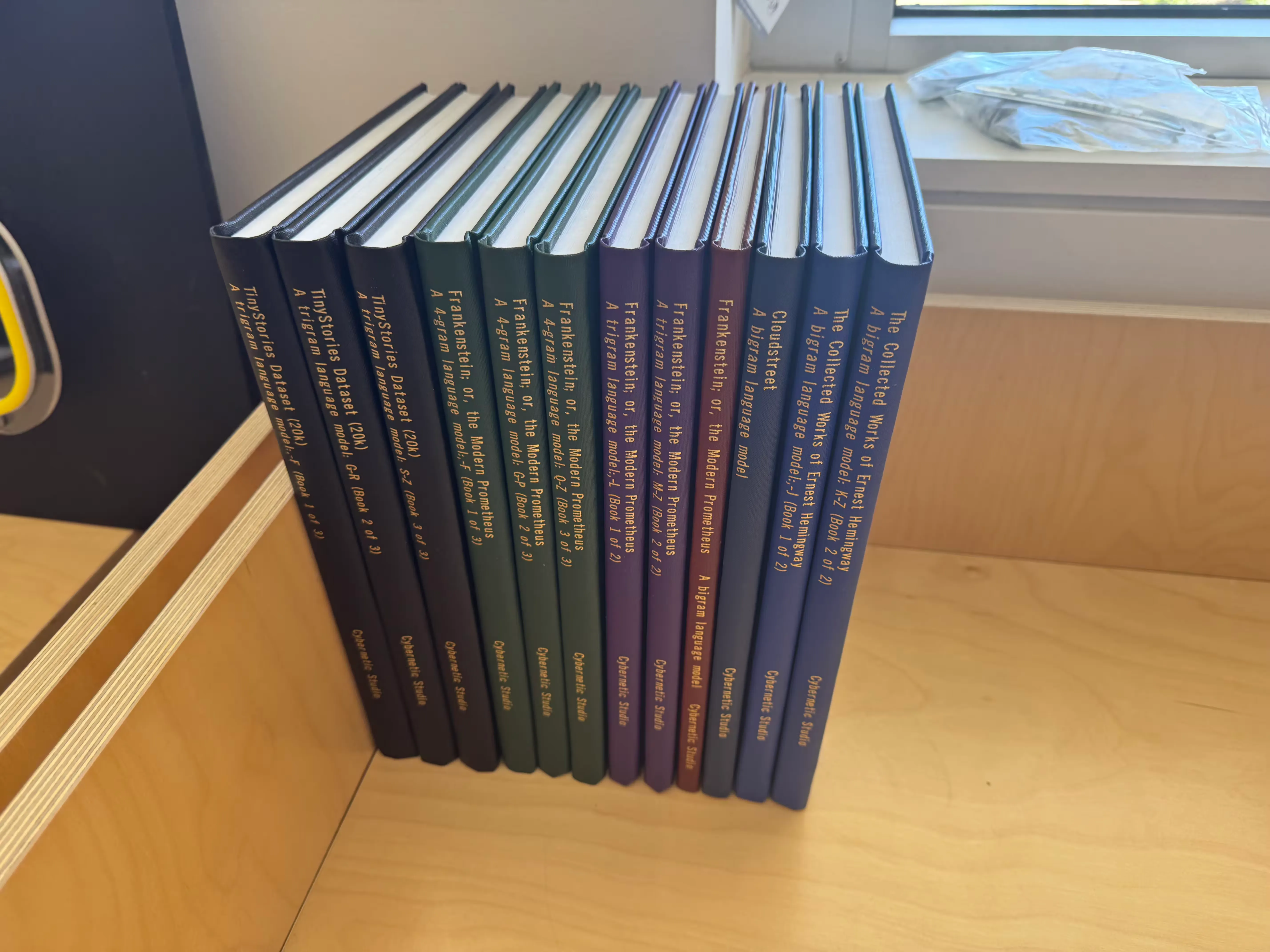

The LLM Library is a collection of pre-trained language models in printed, hardbound book form. Each volume contains n-gram frequency tables typeset from a classic work of literature — the same statistical patterns that underpin modern LLMs, but at human scale. You can hold the entire model in your hands and use it to generate new text with pen, paper, and dice.

Context

The distinctive feature is the curation. Each volume is built from a specific literary work (or the collected works of an author), then typeset in bigram, trigram, and 4-gram variants. The progression across volumes demonstrates the fundamental trade-off between model size and output quality — a trigram model of Frankenstein produces noticeably more coherent text than the bigram version, but the book is considerably thicker. Pick up a Hemingway bigram and you get terse, punchy fragments; the Cloudstreet model wanders into something more sprawling. The model is the text it was trained on, in a way that’s immediately legible.

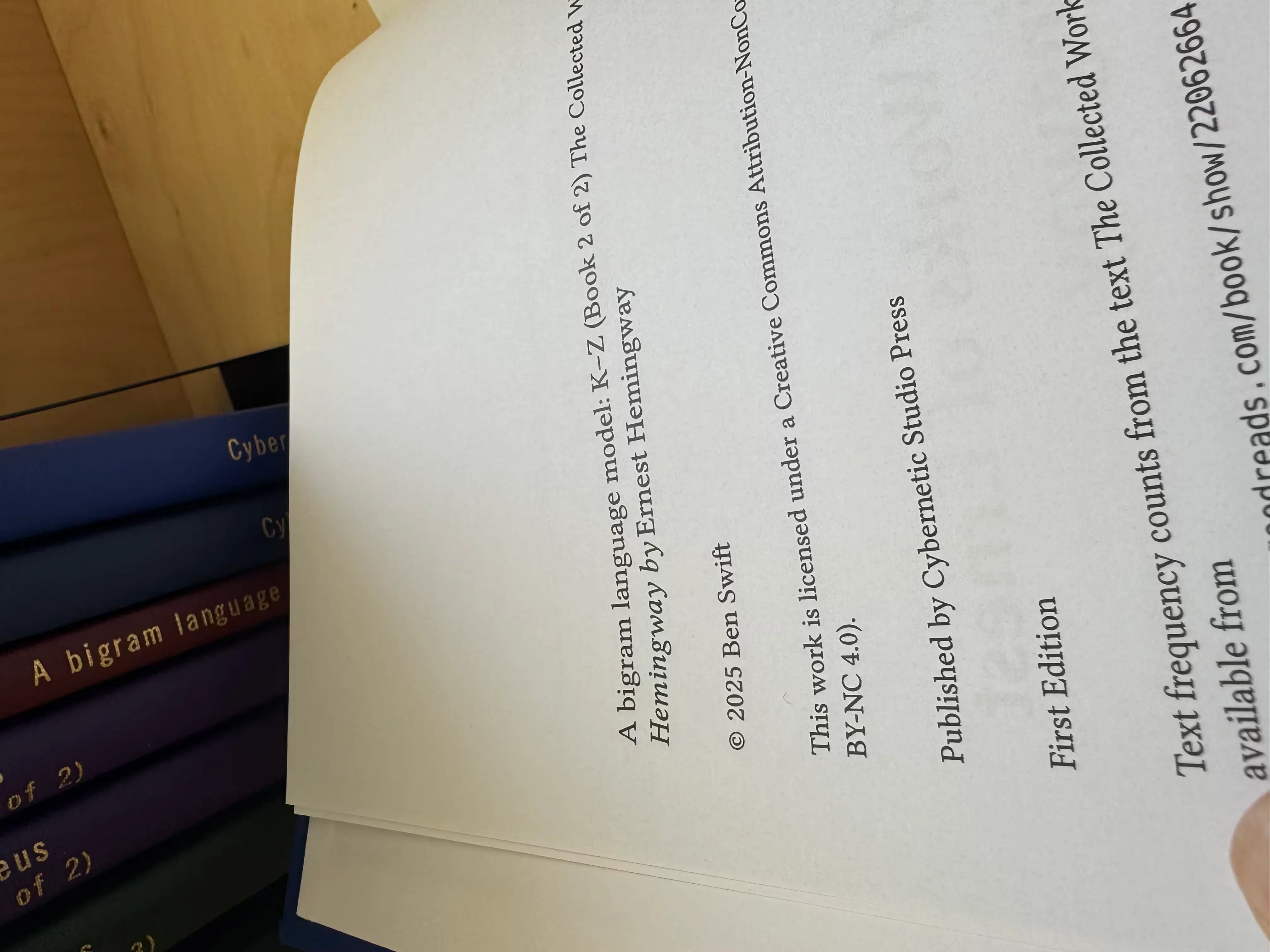

Current volumes include Mary Shelley’s Frankenstein, Tim Winton’s Cloudstreet, the collected works of Ernest Hemingway, and a synthetic dataset (TinyStories) for comparison. Published by Cybernetic Studio Press under CC BY-NC-SA 4.0.

Technical details

The booklets are typeset using the same Rust-to-JSON-to-Typst pipeline that generates the grids for LLMs Unplugged workshops. A Rust CLI tokenises the source text and computes n-gram frequency tables, which are exported as JSON and then laid out by a Typst template into A4 pages with four columns of frequency data, binding margins for hardcover production, and front matter including a copyright page and usage instructions. The Library volumes are specifically curated, printed, and hardbound as standalone artefacts rather than disposable workshop materials.